Ever wanted to measure the read rates of your disk with Bash, R and ggplot?

No problem!

Here is the Bash script to gather the data:

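```bash
#!/bin/bash
# written by reox 2015
# Test the read speed of your disk.
# The script first tests random access (offsets shuffled with shuf) and then
# linear access over the same offsets.
# After you run the script, plot the data with your favourite plotting lib.

if [ $# -ne 1 ]; then
    echo "usage: $0 /dev/sdX" >&2
    exit 1
fi
dev=$1

# Force the C locale so fdisk and dd print English text and times like 0.0123
# (not 0,0123), which would break the comma-separated output.
export LC_ALL=C

# Total number of logical sectors: the "Disk ..." line ends in "<N> sectors".
sectors=$(fdisk -l "$dev" | grep -E -o "[0-9]+ sectors$" | head -n 1 | cut -f 1 -d " ")
# Logical sector size, the unit fdisk counts sectors in:
# "Sector size (logical/physical): 512 bytes / 4096 bytes" -> field 4.
size=$(fdisk -l "$dev" | grep -E "Sector size" | cut -d " " -f 4)

# Step 65536 sectors between test offsets (32 MiB with 512-byte sectors) ...
blocksatonce=65536
# ... and read 128 sectors (64 KiB with 512-byte sectors) at each offset.
# This is much faster than reading the whole disk.
readatonce=128

echo "Testing disk $dev: $sectors sectors of $size bytes, reading $readatonce sectors every $blocksatonce sectors. Need $((sectors / blocksatonce + 1)) dd commands per pass." >&2

# Read one chunk at sector offset $1 and print "offset,seconds,speed,type".
# The last line of dd's statistics ends in ", <time> s, <speed>" in both older
# and newer coreutils versions, so take the last two ", "-separated fields.
measure() {
    printf '%s,' "$1"
    dd if="$dev" skip="$1" ibs="$size" count="$readatonce" of=/dev/null 2>&1 |
        tail -n 1 | awk -F ', ' -v type="$2" '{ print $(NF-1) "," $NF "," type }'
}

echo "offset,seconds,speed,type"

# Random access: the offsets in shuffled order.
for offset in $(seq 0 "$blocksatonce" "$sectors" | shuf); do
    measure "$offset" random
done

# Linear access: the same offsets in ascending order. They were just read in
# the random pass, so some reads may be served from the page cache
# (as root, "sync; echo 3 > /proc/sys/vm/drop_caches" empties it).
for offset in $(seq 0 "$blocksatonce" "$sectors"); do
    measure "$offset" linear
done
```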
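The script only samples the disk. One pass makes one dd call for every offset that `seq 0 65536 $sectors` produces, and each call reads 128 sectors. The sketch below works out that arithmetic. `plan_reads` is a hypothetical helper, not part of the original script. The 976773168-sector disk in the example is an assumed 500 GB drive, not a measured one.

```bash
#!/bin/bash
# Hypothetical helper (not part of the original script): given the sector
# count, the sector size in bytes, the stride and the chunk size (both in
# sectors), print how many dd calls one pass makes and how many bytes it reads
# at most (the last chunk may be cut short by the end of the disk).
plan_reads() {
    local sectors=$1 size=$2 stride=$3 chunk=$4
    local calls bytes pct
    # seq 0 stride sectors yields floor(sectors / stride) + 1 offsets
    calls=$(( sectors / stride + 1 ))
    bytes=$(( calls * chunk * size ))
    # share of the whole disk that is read, as a percentage with 2 decimals
    pct=$(LC_ALL=C awk -v b="$bytes" -v t="$(( sectors * size ))" \
        'BEGIN { printf "%.2f", 100 * b / t }')
    printf '%d calls, %d bytes, %s%% of the disk\n' "$calls" "$bytes" "$pct"
}

# Example: an assumed 500 GB disk with 976773168 sectors of 512 bytes
plan_reads 976773168 512 65536 128
```

For the assumed disk this prints `14905 calls, 976814080 bytes, 0.20% of the disk`. So each pass reads less than 1 GB of the 500 GB drive.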

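Save the script as `testmydisk`, make it executable, and create the logfile. Reading the raw device usually requires root. The sed call strips the " s" unit from the time column:

```bash
./testmydisk /dev/sda | sed -e 's/ s,/,/' > logfile
```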
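Before switching to R, a quick summary in the shell can show whether the logfile was parsed correctly. The sketch below averages the per-read rates for each access type. `summarize_log` is a hypothetical helper, not part of the original post. Like the R code, it assumes 512-byte sectors and 128 sectors per dd call.

```bash
#!/bin/bash
# Hypothetical helper (not part of the original post): print the mean of the
# per-read rates for each access type in a logfile. Assumes 512-byte sectors
# and 128 sectors per dd call, i.e. 65536 bytes per read.
summarize_log() {
    LC_ALL=C awk -F ',' -v chunk=$((512 * 128)) '
        NR == 1 { next }                     # skip the CSV header
        {
            type = $4
            gsub(/^ +| +$/, "", type)        # tolerate spaces around the type
            if ($2 + 0 > 0) {                # skip lines without a usable time
                sum[type] += chunk / $2      # bytes per second for this read
                n[type]++
            }
        }
        END {
            for (t in sum)
                printf "%s %.1f MB/s (%d reads)\n", t, sum[t] / n[t] / 1e6, n[t]
        }' "$1" | LC_ALL=C sort
}
```

Usage: `summarize_log logfile`. It prints one line per access type, sorted by type name.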

Then start R and plot the data:

library(ggplot2)

library(sitools)

data <- read.csv("logfile")

d <- data.frame(offset=512*data$offset, rate=(512*128)/data$seconds,type=data$type)

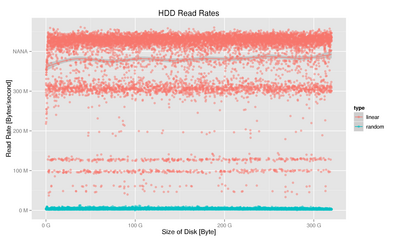

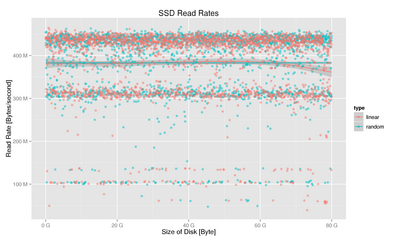

ggplot(d, aes(x=offset,y=rate,colour=type)) + geom_smooth() + geom_point(alpha=0.5) + scale_y_continuous(labels=f2si) + scale_x_continuous(labels=f2si) + xlab("Size of Disk [Byte]") + ylab("Read Rate [Bytes/second]") + ggtitle("HDD Read Rates")

And here are two plots i made on my laptop with internal SSD and HDD.

By the way, you can clearly see the advantage of an SSD over an HDD in random access, while linear access is pretty much the same. I have not yet found a good way to measure access time. The bytes-per-second value from this calculation is not that great either: it uses the time dd reports for the whole read, not just the time it took to actually read the data. You should also take several measurements and average them, ideally on a PC that is not running anything else. Disclaimer: this script is just for demonstration purposes :)
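Several measurements can be averaged by running the script a few times and combining the logfiles. The sketch below averages the dd times per offset and access type. `average_logs` and the file names log1, log2 and log3 are hypothetical. The result keeps the `offset,seconds,speed,type` layout, so the R code above should read it unchanged. The speed column is left empty because dd's formatted speeds (such as `5.3 MB/s`) are text and cannot be averaged as numbers.

```bash
#!/bin/bash
# Hypothetical helper: average the "seconds" column per (offset, type) over
# several logfiles. Example with three runs and one averaged logfile:
#   for i in 1 2 3; do ./testmydisk /dev/sda | sed -e 's/ s,/,/' > "log$i"; done
#   average_logs log1 log2 log3 > logfile
average_logs() {
    echo "offset,seconds,speed,type"
    LC_ALL=C awk -F ',' '
        FNR == 1 { next }                    # skip the header of every file
        {
            type = $4
            gsub(/^ +| +$/, "", type)        # tolerate spaces around the type
            key = ($1 + 0) "," type          # normalise the offset to a number
            sum[key] += $2
            n[key]++
        }
        END {
            for (k in sum) {
                split(k, p, ",")
                # the speed column stays empty: dd speeds are formatted text
                printf "%s,%.6f,,%s\n", p[1], sum[k] / n[k], p[2]
            }
        }' "$@" | LC_ALL=C sort -t ',' -k4,4 -k1,1n
}
```

Rows are sorted by type and then by offset. Sorting is only cosmetic, because ggplot does not depend on row order.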